Why your app feels slow and how we fixed it with PowerSync

How we moved away from request-wait-response screens, shifted routine reads to local SQLite, and improved perceived speed with PowerSync.

- PowerSync

- local-first

- SQLite

The honeymoon phase of every MVP

You probably know this phase. While you're building an MVP, everything feels fast.

One user. An empty database. A quick server. Simple screens. The user clicks a button, the frontend sends a request, the backend responds, and the UI updates. The whole thing is easy to understand.

At that point, it's tempting to believe the architecture will handle growth just fine. Queries are fast. Tables are small. User flows are simple. A form saves almost instantly. A list opens right away.

Then the product starts behaving like a real product.

Lists get longer. Filters become more complex. Analytics appears. Entities become connected. Mobile usage grows. People use the product on multiple devices. The number of users goes up.

And suddenly the loader becomes part of the workflow. The user opens a list and waits. Changes a field and waits again. The internet looks fine, the server is alive, but the product feels sluggish.

That's a frustrating moment. Especially when, technically, everything looks correct.

The usual treatment

In that situation, we usually follow a familiar path.

We check PostgreSQL indexes. Add pagination. Cache endpoints. Move heavy calculations away from hot paths. Run EXPLAIN ANALYZE. Remove unnecessary JOINs. Split large queries into smaller ones. Optimize serializers. Add debounce on the frontend.

All of that matters. It often helps.

But in our case, the problem was not just a slow backend. The problem was the request-and-wait architecture itself.

The classic flow looked like this:

    click -> request -> wait -> response -> update UI

As long as the network and the backend are fast, this works. But once the connection gets flaky, the server takes a bit longer, or the database spends time on a heavier query, the interface becomes hostage to the response.

The user can't move on until the app hears back. Every action becomes a small negotiation with the network.

At some point we decided we didn't want to keep fighting that same loop, so we took a different route.

Local-first: data is always close

We moved to an architecture where the interface reads primarily from a local SQLite database on the user's device.

Important disclaimer: the backend did not disappear.

It still handles authentication, permissions, business rules, validation, and long-term consistency. PostgreSQL remains the central database. But React no longer has to call an API every time it needs to show a list, apply a filter, or update a field on screen.

The flow became:

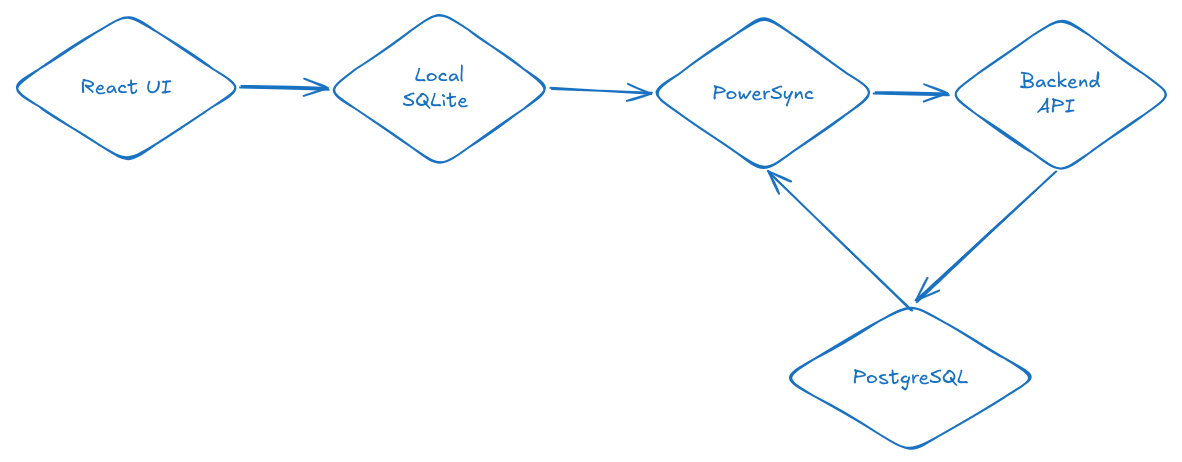

    React UI -> Local SQLite -> PowerSync -> Backend -> PostgreSQL

Now, when the user saves something, the record is written to the local database first. The UI updates almost immediately, and PowerSync sends the change to the backend in the background.

    click -> local write -> update UI -> sync in background

The network still matters. It just no longer sits between the user and the interface.

That was the real shift. We were not trying to shave a few milliseconds off one endpoint. We were removing the network from the main interaction loop.
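To make the "local write first, sync later" loop concrete, here is a minimal sketch in Python using the standard `sqlite3` module as a stand-in for the on-device database. PowerSync keeps its own upload queue, so the `pending_ops` table and the function names below are hypothetical and only illustrate the general pattern, not the SDK's internals.

```python
import json
import sqlite3


def open_local_db():
    """Create an in-memory stand-in for the on-device database."""
    db = sqlite3.connect(":memory:")
    db.execute("CREATE TABLE records (id TEXT PRIMARY KEY, title TEXT)")
    # Hypothetical outbox: local changes waiting to be uploaded.
    db.execute(
        "CREATE TABLE pending_ops ("
        "seq INTEGER PRIMARY KEY AUTOINCREMENT, op TEXT, row_id TEXT, data TEXT)"
    )
    return db


def save_record(db, record_id, title):
    """Write the row and queue the change in one local transaction."""
    with db:  # commits on success, rolls back on error; no network involved
        db.execute(
            "INSERT INTO records (id, title) VALUES (?, ?)", (record_id, title)
        )
        db.execute(
            "INSERT INTO pending_ops (op, row_id, data) VALUES ('PUT', ?, ?)",
            (record_id, json.dumps({"title": title})),
        )


def drain_outbox(db, send):
    """Background step: upload queued changes in order, oldest first."""
    rows = db.execute(
        "SELECT seq, op, row_id, data FROM pending_ops ORDER BY seq"
    ).fetchall()
    sent = 0
    for seq, op, row_id, data in rows:
        # If send raises, the operation stays queued for the next attempt.
        send({"op": op, "id": row_id, "data": json.loads(data)})
        with db:
            db.execute("DELETE FROM pending_ops WHERE seq = ?", (seq,))
        sent += 1
    return sent
```

The UI can read from `records` as soon as `save_record` returns. `drain_outbox` can run whenever the network is available, and a failed upload leaves the change in the queue.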

How it works inside

The frontend works with local SQLite through PowerSync. Components don't need a screen-specific API for every read. They read through hooks or a DAL layer that runs SQL against the local database.

The backend also changes its role. It is no longer the layer that returns JSON for every render. It becomes the place where permissions, constraints, relationships, and incoming upload operations are checked.

PowerSync handles synchronization. It delivers data to the client, keeps local SQLite up to date, and sends local changes back.

We don't download the whole database

The first question is usually obvious: does the whole database end up on the user's device?

No.

A key part of PowerSync is partial replication. The client receives only the rows that the user is allowed to access.

For example, if a user belongs to several workspaces, they receive data only for those workspaces. Everything else never reaches the device.

A simplified sync_rules.yaml:

    bucket_definitions:
      by_workspace:
        parameters: |
          SELECT workspace_id
          FROM workspace_memberships
          WHERE user_id = request.user_id()
        data:
          - SELECT * FROM records WHERE workspace_id = bucket.workspace_id
          - SELECT * FROM categories WHERE workspace_id = bucket.workspace_id

This is not the same as downloading everything and hiding the extra rows in the frontend. The extra rows are never synchronized.

That gives us two useful properties.

First, users don't physically receive data that belongs to someone else.

Second, the backend and PostgreSQL are involved in fewer routine reads. Lists, sorting, filters, and some analytics can run locally.
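One way to reason about the `by_workspace` rule is to replay it by hand. The sketch below is a plain Python and `sqlite3` simulation, not PowerSync code: the parameter query picks the user's workspaces, and the data query is then evaluated once per workspace. The function name `rows_synced_to` and the two-column table layout are assumptions made for the example.

```python
import sqlite3


def rows_synced_to(server, user_id):
    """Return the records a user would receive under the by_workspace rule."""
    # Parameter query: one bucket per workspace the user belongs to.
    workspace_ids = [
        row[0]
        for row in server.execute(
            "SELECT workspace_id FROM workspace_memberships "
            "WHERE user_id = ? ORDER BY workspace_id",
            (user_id,),
        )
    ]
    synced = []
    for workspace_id in workspace_ids:
        # Data query: only the rows that belong to this bucket.
        synced.extend(
            server.execute(
                "SELECT id, workspace_id FROM records "
                "WHERE workspace_id = ? ORDER BY id",
                (workspace_id,),
            ).fetchall()
        )
    return synced


# Tiny stand-in for the central database.
server = sqlite3.connect(":memory:")
server.executescript(
    """
    CREATE TABLE workspace_memberships (user_id TEXT, workspace_id TEXT);
    CREATE TABLE records (id TEXT PRIMARY KEY, workspace_id TEXT);
    INSERT INTO workspace_memberships VALUES ('alice', 'w1'), ('bob', 'w2');
    INSERT INTO records VALUES ('r1', 'w1'), ('r2', 'w2'), ('r3', 'w1');
    """
)
```

A helper like this also helps when a row is missing on a client: if the row's `workspace_id` is not among the user's memberships, the rule never selects it.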

For example, a list screen can open with a regular SQL query:

    SELECT *
    FROM records
    WHERE workspace_id = ?
    ORDER BY created_at DESC
    LIMIT 50;

If we need an index, that index can live locally too:

    CREATE INDEX records_workspace_created_at_idx
    ON records (workspace_id, created_at);

This is a real database near the user. Not a backup cache, but a proper data source for the interface.
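The effect of that index can be checked with plain SQLite. The sketch below uses Python's `sqlite3` module with an in-memory database and made-up sample rows; it runs the list query and asks SQLite for its query plan. Depending on the PowerSync SDK, local indexes may need to be declared in the client schema rather than created by hand, so treat this as an illustration of the SQL, not of the SDK setup.

```python
import sqlite3

# In-memory stand-in for the on-device database (sample data is made up).
db = sqlite3.connect(":memory:")
db.execute(
    "CREATE TABLE records ("
    "id TEXT PRIMARY KEY, workspace_id TEXT, amount REAL, created_at TEXT)"
)
db.execute(
    "CREATE INDEX records_workspace_created_at_idx "
    "ON records (workspace_id, created_at)"
)
db.executemany(
    "INSERT INTO records VALUES (?, ?, ?, ?)",
    [
        ("r1", "w1", 10.0, "2024-01-01T10:00:00Z"),
        ("r2", "w1", 25.5, "2024-01-02T09:30:00Z"),
        ("r3", "w2", 7.0, "2024-01-03T12:00:00Z"),
    ],
)

LIST_QUERY = (
    "SELECT id, amount, created_at FROM records "
    "WHERE workspace_id = ? ORDER BY created_at DESC LIMIT 50"
)


def list_records(workspace_id):
    """Newest-first list for one workspace, read entirely from local storage."""
    return db.execute(LIST_QUERY, (workspace_id,)).fetchall()


def query_plan(workspace_id):
    """Return SQLite's plan so we can confirm the index is actually used."""
    rows = db.execute("EXPLAIN QUERY PLAN " + LIST_QUERY, (workspace_id,))
    return " | ".join(row[3] for row in rows)


print(list_records("w1"))
print(query_plan("w1"))
```

The plan should mention `records_workspace_created_at_idx`. If it reports a full table scan instead, the index does not match the query.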

This is where perceived speed starts to change. Opening a list no longer depends on a round trip to the server. A filter does not become another API request. Sorting does not wait for a database on the other side of the network. Some analytics can happen right on the device.

To the user, this does not feel like "we optimized a query." It feels like a different product. The screen appears immediately. Changes are visible immediately. Transitions feel calmer. Loaders disappear from places where they used to feel unavoidable.

The backend and PostgreSQL are still important. They handle synchronization, initial loading, permission checks, and persistence. But routine reads no longer go through the API every time.

A separate token for sync

We separated normal application authorization from access to the synchronization layer.

PowerSync uses a separate short-lived JWT. The client calls the regular API, the backend validates the user, and then issues a token specifically for sync.

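    from django.conf import settings
    from rest_framework.permissions import IsAuthenticated
    from rest_framework.response import Response
    from rest_framework.views import APIView


    class GetPowerSyncToken(APIView):
        # Only users with a valid app session can request a sync token.
        permission_classes = [IsAuthenticated]

        def get(self, request):
            # create_powersync_jwt is our own helper that signs the sync JWT.
            token = create_powersync_jwt(str(request.user.id))
            return Response({
                "token": token,
                "powersync_url": settings.POWERSYNC_URL,
            })

PowerSync checks the claims in that token and uses them when applying sync rules.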

This split turned out to be convenient. The normal app session has its own lifecycle. Sync gets a separate short-lived pass.
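A helper like `create_powersync_jwt` could look roughly like the sketch below. It signs with HS256 using only the standard library so the example stays self-contained. The deployment described in this article validates tokens through a JWKS URL, which usually means an asymmetric key and a JWT library instead. The extra parameters (`secret`, `audience`, `key_id`, `ttl_seconds`, `now`) and the exact claim set are assumptions, so check them against the PowerSync authentication docs.

```python
import base64
import hashlib
import hmac
import json
import time


def _b64url(raw):
    """Base64url without padding, as used in JWTs."""
    return base64.urlsafe_b64encode(raw).rstrip(b"=").decode("ascii")


def create_powersync_jwt(user_id, secret, audience, key_id,
                         ttl_seconds=300, now=None):
    """Sign a short-lived token whose subject is the user ID."""
    issued_at = int(time.time()) if now is None else now
    header = {"alg": "HS256", "typ": "JWT", "kid": key_id}
    claims = {
        "sub": user_id,                   # read by the sync rules as the user
        "aud": audience,                  # must match the configured audience
        "iat": issued_at,
        "exp": issued_at + ttl_seconds,   # keep the lifetime short
    }
    signing_input = ".".join(
        _b64url(json.dumps(part, separators=(",", ":")).encode("utf-8"))
        for part in (header, claims)
    )
    signature = hmac.new(
        secret, signing_input.encode("ascii"), hashlib.sha256
    ).digest()
    return signing_input + "." + _b64url(signature)
```

Because the token expires within minutes, the client has to ask the backend for a fresh one from time to time. That gives the backend a regular point to re-check that the user still has access.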

Local mutations

The biggest frontend change was mental: saving no longer meant "send a POST request right now."

The local database changes first.

    await powerSync.writeTransaction(async (tx) => {
      await tx.execute(
        `INSERT INTO records (id, workspace_id, amount, created_at)
         VALUES (?, ?, ?, ?)`,
        [id, workspaceId, amount, createdAt]
      );

      await tx.execute(
        `UPDATE categories
         SET usage_count = COALESCE(usage_count, 0) + 1
         WHERE id = ?`,
        [categoryId]
      );
    });

One local transaction can update several related entities.

Create a record. Recalculate local state. The UI immediately sees a consistent picture.

The user does not need to wait for server confirmation. They see the result right away, and synchronization catches up in the background.

This changes the feel of the product a lot. Even if the performance numbers do not look dramatic, the perceived speed improves.

Upload is a separate pipeline

Offline changes how people use the product.

A user can update the same record several times before the connection comes back.

    update title
    update amount
    update category
    update title again

If every intermediate state is sent to the server, the backend gets a lot of noise. In most cases, it needs the final version of the row, not the full history of how the user got there.

So before upload, we compact the queue.

    async function uploadData(database) {
      const transaction = await database.getNextCrudTransaction();
      // Nothing is queued, so there is nothing to upload.
      if (!transaction) return;

      // Keep one merged operation per row.
      const byKey = new Map();
      for (const item of transaction.crud) {
        const key = `${item.table}::${item.id}`;
        const previous = byKey.get(key);
        byKey.set(key, previous ? mergeOperations(previous, item) : item);
      }

      const batch = [...byKey.values()];
      await postBatchWithRetries(uploadUrl, batch);
      // Mark the transaction as done only after the server accepted the batch.
      await transaction.complete();
    }

We group operations by row and send only what actually needs to be applied on the server.

Fewer redundant operations. Fewer retries. Fewer strange edge cases when the network comes back.
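The merge step is the interesting part of compaction. Below is a small Python sketch of one possible set of merge rules. It assumes each queued operation is a dictionary with the `op`, `table`, `id`, and `data` keys that the backend expects. The rules themselves (a later PUT or DELETE replaces earlier work, a PATCH is folded into what came before) are an illustration and not necessarily the exact rules we run in production.

```python
def merge_operations(previous, current):
    """Fold a later operation on a row into an earlier one on the same row."""
    if current["op"] in ("PUT", "DELETE"):
        # A full write or a delete supersedes whatever came before.
        return current
    if previous["op"] == "DELETE":
        # PATCH after DELETE is unexpected; pass the later operation through.
        return current
    # PATCH after PUT or PATCH: later field values win, earlier op type stays.
    merged = {**previous.get("data", {}), **current.get("data", {})}
    return {**previous, "data": merged}


def compact(operations):
    """Reduce a queue to at most one operation per (table, id) pair."""
    by_key = {}
    for item in operations:
        key = (item["table"], item["id"])
        previous = by_key.get(key)
        by_key[key] = merge_operations(previous, item) if previous else item
    # Rows come out in the order they were first touched.
    return list(by_key.values())


queue = [
    {"op": "PATCH", "table": "records", "id": "r1", "data": {"title": "Lunch"}},
    {"op": "PATCH", "table": "records", "id": "r1", "data": {"amount": 12}},
    {"op": "PATCH", "table": "records", "id": "r1", "data": {"category_id": "c1"}},
    {"op": "PATCH", "table": "records", "id": "r1", "data": {"title": "Team lunch"}},
]
print(compact(queue))
```

Two caveats apply. Compaction changes the relative order of operations on different rows, so it is only safe when the server does not depend on that order. A row created and deleted offline still produces a DELETE, so the server-side delete has to tolerate a row that never existed.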

But the upload queue has to be treated as a real part of the system. You need to know which errors can be retried, which ones are final, how to handle partial success, and what happens when one operation keeps blocking the queue.
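The split between retryable and final errors can be sketched as a small retry loop. This is a Python illustration of the idea behind a helper like `postBatchWithRetries`, not our actual client code. The `send` callable, which is assumed to return an HTTP status code, the status list, and the exception names are all assumptions made for the example.

```python
import time

# Statuses that usually indicate a temporary problem worth retrying.
RETRYABLE_STATUS = {408, 429, 500, 502, 503, 504}


class FinalUploadError(Exception):
    """The server rejected the batch; retrying the same payload will not help."""


class RetriesExhausted(Exception):
    """The server kept failing with retryable errors."""


def post_batch_with_retries(send, batch, max_attempts=5, base_delay=0.5,
                            sleep=time.sleep):
    """Send a batch, backing off exponentially between retryable failures."""
    for attempt in range(1, max_attempts + 1):
        status = send(batch)
        if 200 <= status < 300:
            return attempt  # number of attempts it took
        if status not in RETRYABLE_STATUS:
            raise FinalUploadError(f"batch rejected with status {status}")
        if attempt < max_attempts:
            sleep(base_delay * 2 ** (attempt - 1))  # 0.5s, 1s, 2s, ...
    raise RetriesExhausted(f"gave up after {max_attempts} attempts")
```

A final error should not leave the queue blocked forever. The operation has to be surfaced to the user or moved aside so that later changes can still upload. In a real client it is also worth adding random jitter to the delay so that many devices do not retry at the same moment.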

The backend is still in charge

Local-first does not mean the frontend becomes trusted.

Yes, the user writes data locally first. Yes, the UI updates immediately. But the backend still validates every operation that arrives from the queue.

    errors = []

    for index, operation in enumerate(batch):
        try:
            # Each operation gets its own transaction, so one rejected
            # operation does not roll back the rest of the batch.
            with transaction.atomic():
                action = operation["op"]
                table = operation["table"]
                row_id = operation["id"]
                data = operation.get("data", {})

                if action == "PUT":
                    apply_put(table, row_id, data)
                elif action == "PATCH":
                    apply_patch(table, row_id, data)
                elif action == "DELETE":
                    apply_delete(table, row_id)
                else:
                    raise ValidationError("Unsupported operation")
        except ValidationError as exc:
            # Validation failures are final: retrying the same payload will not help.
            errors.append({
                "index": index,
                "table": operation.get("table"),
                "id": operation.get("id"),
                "retryable": False,
                "detail": str(exc),
            })

Permissions, limits, relationships, field correctness, and operation validity all stay on the server.

PowerSync helps move changes around. It should not become a shortcut around business logic or security.
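As an illustration of what "the backend validates every operation" can mean in practice, here is a framework-free Python sketch of the checks a function like `apply_patch` might run before touching the database. The allowlist contents, the `validate_patch` name, and the stand-in `ValidationError` class are hypothetical. A real implementation would use the framework's own exception and load the row and the user's memberships from PostgreSQL.

```python
class ValidationError(Exception):
    """Stand-in for the framework's ValidationError."""


# Hypothetical allowlist: tables and fields that clients may change.
WRITABLE_FIELDS = {
    "records": {"title", "amount", "category_id"},
    "categories": {"name"},
}


def validate_patch(table, data, row_workspace_id, user_workspace_ids):
    """Reject a PATCH that targets the wrong table, fields, or workspace."""
    if table not in WRITABLE_FIELDS:
        raise ValidationError(f"Table {table!r} is not writable by clients")

    unknown = set(data) - WRITABLE_FIELDS[table]
    if unknown:
        # Blocks attempts to move a row by patching workspace_id, for example.
        raise ValidationError(f"Fields are not writable: {sorted(unknown)}")

    if row_workspace_id not in user_workspace_ids:
        raise ValidationError("Row belongs to a workspace the user cannot access")

    return dict(data)  # safe to apply
```

An allowlist works better here than a blocklist. A column added to the table later stays read-only for clients until someone deliberately opens it up.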

Cross-platform became simpler

Another practical benefit: the same code can be used across platforms.

In our case, the same frontend approach works for web, PWA, Android TWA, and an iOS WebView wrapper. The shells are different, but the data logic stays shared.

Platform details don't disappear. Storage, permissions, lifecycle, push notifications, and background behavior still matter, especially on mobile.

But the data approach does not have to be rewritten for every platform.

Reads are local. Writes are local. Sync runs in the background.

For users, this feels closer to a native app, even though the interface is still built with a web stack.

Minimal self-hosted deployment

You can run this kind of architecture with Docker Compose.

At minimum, you need a frontend, a backend, PowerSync, and PostgreSQL.

    services:
      frontend:
        build:
          context: ./frontend
        ports:
          - "4173:4173"

      backend:
        build:
          context: ./backend
        ports:
          - "8000:8000"

      powersync:
        build:
          context: ./powersync
        command: ["start", "-r", "unified"]
        ports:
          - "7001:7001"
        volumes:
          - ./powersync/config:/config

      postgres:
        image: postgres:16
        environment:
          POSTGRES_DB: app
          POSTGRES_USER: app
          POSTGRES_PASSWORD: change_me

A simplified PowerSync config:

    replication:
      connections:
        - type: postgresql
          uri: !env PS_DATA_SOURCE_URI
          sslmode: disable

    storage:
      type: postgresql
      uri: !env PS_STORAGE_PG_URI
      sslmode: disable

    sync_rules:
      path: sync_rules.yaml

    client_auth:
      jwks_uri: !env PS_JWKS_URL
      audience:
        - !env PS_AUDIENCE

- PS_DATA_SOURCE_URI points to the main PostgreSQL database.

- PS_STORAGE_PG_URI is used for PowerSync's own storage.

- PS_JWKS_URL lets PowerSync validate JWTs.

The other side

If you're still skeptical about local-first, that's a good instinct.

This is not free speed. It is an architectural choice that solves some problems and brings others.

The first thing to decide is how to handle conflicts. If two users edit the same row while offline, you need to know what should happen during sync. Sometimes Last Write Wins is enough. Sometimes it is the wrong choice, because the last write can erase important data. More sensitive parts of the product need domain-specific merge logic.
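The difference between Last Write Wins and a field-level merge is easiest to see on one row. The Python sketch below is a generic illustration, not PowerSync behavior. The `updated_at` counter, the function names, and the rule that the server value stays in place on a true conflict are assumptions.

```python
def last_write_wins(server_row, client_row):
    """Keep whichever whole row was written last (ties go to the server)."""
    if client_row["updated_at"] > server_row["updated_at"]:
        return client_row
    return server_row


def merge_changed_fields(base, server_row, client_row, fields):
    """Three-way merge: apply each side's changes relative to the common base."""
    merged = dict(server_row)
    conflicts = []
    for field in fields:
        server_changed = server_row[field] != base[field]
        client_changed = client_row[field] != base[field]
        if client_changed and not server_changed:
            merged[field] = client_row[field]
        elif client_changed and server_changed and client_row[field] != server_row[field]:
            # Both sides changed the same field: keep the server value and
            # report the field so domain-specific logic can decide.
            conflicts.append(field)
    return merged, conflicts


base = {"title": "Lunch", "amount": 10, "updated_at": 1}
server = {"title": "Lunch", "amount": 20, "updated_at": 2}       # another user fixed the amount
client = {"title": "Team lunch", "amount": 10, "updated_at": 3}  # offline rename

print(last_write_wins(server, client))
print(merge_changed_fields(base, server, client, ("title", "amount")))
```

With Last Write Wins, the offline rename silently restores the old amount. The field-level merge keeps both edits, but it needs the base version of the row, which means the client or the server has to remember what the row looked like before the offline edit.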

The second risk area is migrations. The local database lives on the user's device. A client may not open the app for a month. During that time you may change the schema, add fields, rename tables, or remove old columns. When that client comes back, local SQLite has to survive the new version. Sometimes a normal migration is enough. Sometimes you need recovery. Sometimes the safest move is to rebuild local state and sync again.
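The "migrate if possible, otherwise rebuild and sync again" decision can be sketched with SQLite's `user_version` pragma. PowerSync manages its own local storage, so this is a generic SQLite illustration in Python rather than a description of the SDK. The version numbers, the `note` column, and the function name are made up for the example.

```python
import sqlite3

SCHEMA_VERSION = 2
CREATE_LATEST = "CREATE TABLE records (id TEXT PRIMARY KEY, title TEXT, note TEXT)"

# Hypothetical migration steps, keyed by the version they upgrade FROM.
MIGRATIONS = {
    1: "ALTER TABLE records ADD COLUMN note TEXT",
}


def prepare_local_db(db):
    """Bring a local database to SCHEMA_VERSION, rebuilding it if necessary."""
    version = db.execute("PRAGMA user_version").fetchone()[0]
    if version == SCHEMA_VERSION:
        return "current"

    # Collect the chain of steps from the stored version to the latest one.
    steps, cursor = [], version
    while cursor in MIGRATIONS:
        steps.append(MIGRATIONS[cursor])
        cursor += 1

    if version > 0 and cursor == SCHEMA_VERSION:
        for statement in steps:
            db.execute(statement)
        outcome = "migrated"
    else:
        # Fresh install or an unknown version: start clean and re-sync.
        db.execute("DROP TABLE IF EXISTS records")
        db.execute(CREATE_LATEST)
        outcome = "rebuilt"

    db.execute(f"PRAGMA user_version = {SCHEMA_VERSION}")
    db.commit()
    return outcome
```

A `"rebuilt"` result means the client has to download its data again. Before dropping anything, check that no local changes are still waiting in the upload queue, otherwise the rebuild discards work the user already did.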

Another source of pain is sync_rules.yaml. Sometimes a row exists in PostgreSQL but does not appear on the client. The cause can be JWT claims, a bucket, workspace_id, a rule that is too narrow, or data that landed in the wrong set. These issues are not always hard, but they require discipline. You need good logs.

And then there is the mental complexity. Classic API architecture is easier to understand: click a button, send a request, receive a response, show the result. In a local-first system, state lives in several places. There is a local database. There is an upload queue. There is the server. There is replication. There is a moment when the user already sees a local change, but the server has not accepted it yet. You can live with that. But it requires careful design and good debugging tools.

Was it worth it?

For us, yes.

Moving to PowerSync and a local-first approach did not just make the product faster. It changed how the interface feels.

The user presses a button and sees the result immediately. A list opens without waiting for an API. Filters do not turn into a chain of server requests. The mobile app handles a flaky connection more calmly.

When the network stops being part of every action, the product starts to feel different.

We use this approach in the product:

Next time, I will write about how we added End-to-End Encryption on top of this local database, so even we cannot see what users store.